Are you one of the 85% of internet users who watch videos without sound? If so, you know the value of a good subtitle. Industry data suggest that captions can boost video views by 12% and increase watch time.

Subtitles and captions have become an integral part of how we consume digital media. Their growing importance is driven mainly by increased global content sharing and the need for accessibility, and people are increasingly aware of why subtitles matter. In fact, the FCC (Federal Communications Commission), through its captioning rules, and the ADA (Americans with Disabilities Act) have made captions mandatory for many videos to assist individuals with disabilities.

However, with technology and AI advancing rapidly, how are subtitling trends changing? Let’s take a closer look.

The Future of Subtitles

Subtitles, also known as captions, provide written text for the audio in a video. Initially devised for hearing-impaired viewers, subtitles have become an indispensable tool for learning, content accessibility, and global content sharing. Market research projects that the global captioning and subtitling solutions market will reach USD 476.9 million by 2028. Let’s explore the role technology plays in shaping this future and the enormous potential of AI subtitles.

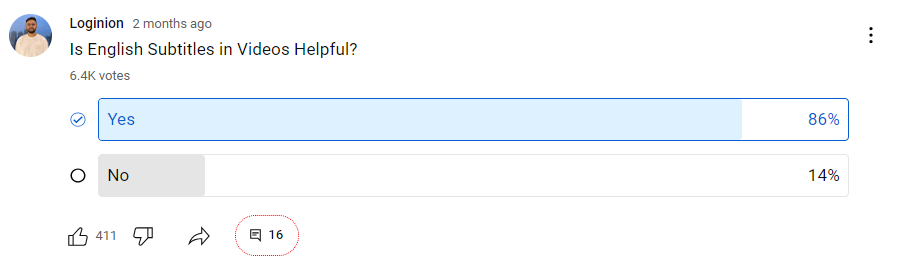

Increase in Preference for Subtitles

A short poll by popular YouTuber Divyanshu Logi (Haunting Tube and Loginion) highlighted a major trend: 86% of his audience preferred watching videos with subtitles. While this result comes from one creator's audience, it is consistent with a broader shift in viewer preferences. Additionally, a study conducted by Verge found that more than 80% of viewers are likely to watch a video to the end if it includes subtitles. These statistics underline the growing recognition of subtitles as a valuable addition to the viewing experience, improving comprehension, accessibility, and overall engagement with multimedia content.

Multilingual and Multimodal Subtitles

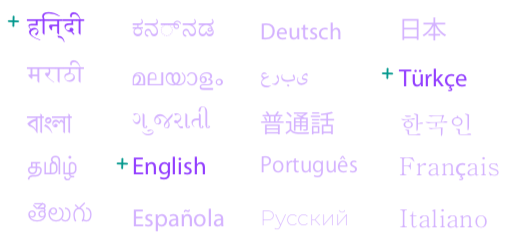

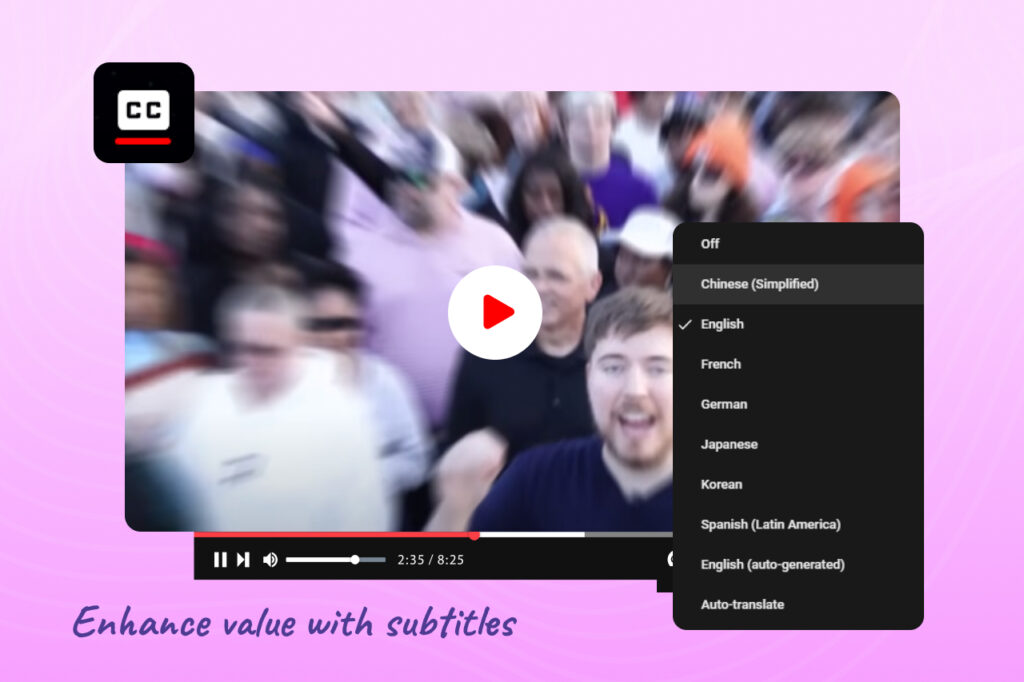

As globalization continues to connect people across borders, the demand for multilingual subtitles is increasing. In the coming years, we can expect sophisticated subtitle systems capable of providing seamless translation in real time. These systems will allow viewers to enjoy content in their preferred language without compromising the original audio-visual experience.

Additionally, multimodal subtitles will gain prominence, combining text with other media elements such as images, symbols, or animations. This approach will enhance comprehension, especially for complex or technical content, by providing visual representations of abstract concepts. Multimodal subtitles can also be beneficial for individuals with learning disabilities or cognitive impairments, enabling them to engage with the content more effectively.

Subtitles in the Creator Economy

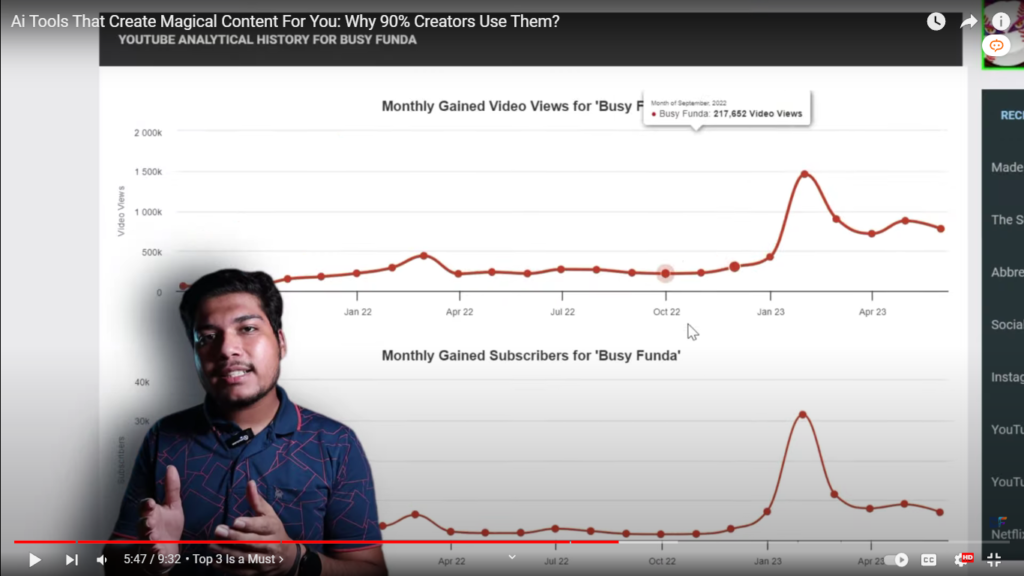

OTT (over-the-top) streaming services and social media platforms such as YouTube have made it easier for people to access content from around the world, creating growing demand for multilingual subtitles on content that appeals to diverse audiences. Subtitling gives content creators the opportunity to extend their reach and build a larger fan base. By providing subtitles in multiple languages, creators can break language barriers and appeal to a global audience. Subtitles also improve search engine optimization, enhance viewer comprehension, serve the deaf and hard of hearing community, and encourage social sharing.

Creators are already seeing results, so more of them are likely to adopt subtitles to increase views and reach and to build stronger connections with viewers. Dubverse is already working on making subtitles accessible to all creators in regional and global languages.

Improved Accuracy

AI-powered tools and algorithms are steadily improving the accuracy of subtitles. Dubverse SUB currently generates English subtitles with 99% accuracy. With advances in speech recognition and natural language processing, future subtitles promise even greater precision. AI models learn from vast amounts of data, which improves their identification of words, phrases, and context. This will minimize errors and misinterpretations, giving viewers subtitles that faithfully capture the intended meaning of the content.
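The figure above does not say how accuracy is measured. A common way to score transcript accuracy is word error rate (WER): the minimum number of word substitutions, deletions, and insertions needed to turn the generated transcript into a reference transcript, divided by the number of reference words, with "accuracy" often reported as 1 - WER. The sketch below computes WER with a standard edit-distance table. The function name and the simple normalization (lowercasing and splitting on whitespace, with no punctuation handling) are assumptions for illustration, not how Dubverse or any particular tool scores its output.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Return (substitutions + deletions + insertions) / number of reference words."""
    # Assumed normalization: lowercase and split on whitespace only.
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")

    # prev[j] = edit distance between the reference words processed so far
    # and the first j hypothesis words (initially: the empty reference).
    prev = list(range(len(hyp) + 1))
    for i in range(1, len(ref) + 1):
        curr = [i] + [0] * len(hyp)
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            curr[j] = min(
                prev[j] + 1,         # deletion: reference word missing from hypothesis
                curr[j - 1] + 1,     # insertion: extra word in hypothesis
                prev[j - 1] + cost,  # substitution (cost 1) or match (cost 0)
            )
        prev = curr
    return prev[len(hyp)] / len(ref)


reference = "subtitles make videos accessible to everyone"
hypothesis = "subtitles make video accessible to everyone"
wer = word_error_rate(reference, hypothesis)
print(f"WER: {wer:.3f}, accuracy: {1 - wer:.1%}")  # WER: 0.167, accuracy: 83.3%
```

One wrong word in a six-word sentence already drops accuracy to about 83%, which shows how demanding a 99% figure is: roughly one error per hundred words.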

AI-Powered Subtitles

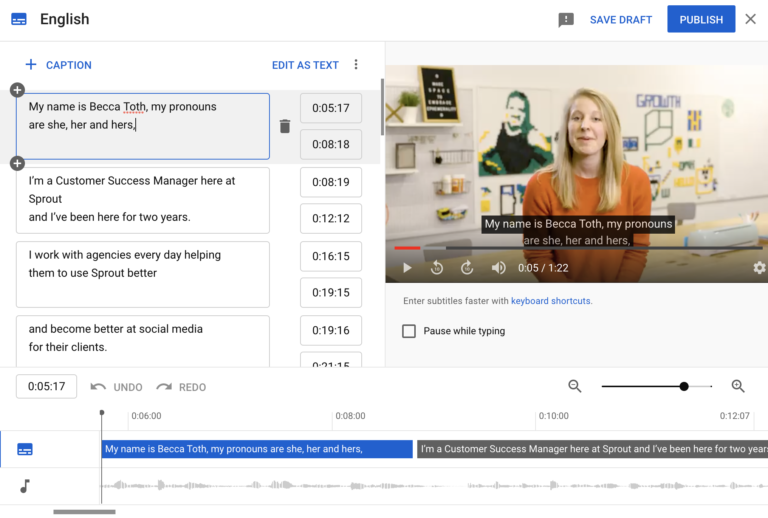

Modern technology has had a significant impact on how subtitles are generated and used. The earliest methods of subtitling were manual and time-consuming, but AI-powered algorithms can now automatically generate accurate and synchronized subtitles, reducing the time and effort required for manual captioning.

This shift has made subtitle generation more affordable, accurate, and fast, putting subtitling within reach of content creators both small and large.
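"Synchronized" means every piece of subtitle text carries a start and end time. One widely used way to store timed subtitles is the SubRip (.srt) format: a cue number, a `HH:MM:SS,mmm --> HH:MM:SS,mmm` timing line, the text, and a blank line. The sketch below shows how timed cues could be written out as SRT. The `Cue` class and function names are illustrative assumptions; in practice the timings would come from whatever recognition step produced the text.

```python
from dataclasses import dataclass


@dataclass
class Cue:
    start: float  # seconds from the start of the video
    end: float    # seconds from the start of the video
    text: str


def srt_timestamp(seconds: float) -> str:
    """Format a time in seconds as an SRT timestamp, HH:MM:SS,mmm."""
    total_ms = round(seconds * 1000)
    hours, rem = divmod(total_ms, 3_600_000)
    minutes, rem = divmod(rem, 60_000)
    secs, ms = divmod(rem, 1_000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d},{ms:03d}"


def to_srt(cues: list[Cue]) -> str:
    """Serialize cues as SRT blocks: index, timing line, text, separated by blank lines."""
    blocks = []
    for index, cue in enumerate(cues, start=1):
        timing = f"{srt_timestamp(cue.start)} --> {srt_timestamp(cue.end)}"
        blocks.append(f"{index}\n{timing}\n{cue.text}\n")
    return "\n".join(blocks)


print(to_srt([Cue(1.0, 3.5, "Hello, and welcome back."),
              Cue(4.0, 6.25, "Today we're talking about subtitles.")]))
```

Millisecond timestamps are what let a player show each line exactly while it is being spoken, which is the part that made manual captioning so time-consuming.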

Automatic Caption Prediction Algorithms

Artificial Intelligence (AI) and Machine Learning (ML) algorithms can now analyze a video’s audio and automatically generate reasonably accurate subtitle scripts. Companies like YouTube and Facebook have already started using these algorithms to auto-generate captions for video content on their platforms.
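Many speech recognition systems can return word-level timestamps, but a raw stream of timed words still has to be split into readable subtitle cues. The sketch below shows one simple, hypothetical grouping rule: start a new cue when the text would exceed a character limit, when the cue would stay on screen too long, or when the speaker pauses. The `Word`/`Cue` classes and the default limits (42 characters, 6 seconds, 0.8-second pause) are illustrative assumptions, not the actual algorithm or settings used by YouTube, Facebook, or Dubverse.

```python
from dataclasses import dataclass


@dataclass
class Word:
    text: str
    start: float  # seconds
    end: float    # seconds


@dataclass
class Cue:
    start: float
    end: float
    text: str


def _make_cue(words: list[Word]) -> Cue:
    """Build one cue spanning the first word's start to the last word's end."""
    return Cue(words[0].start, words[-1].end, " ".join(w.text for w in words))


def group_words_into_cues(words: list[Word], max_chars: int = 42,
                          max_duration: float = 6.0, max_gap: float = 0.8) -> list[Cue]:
    """Greedily pack timed words into cues, breaking on length, duration, or pauses."""
    cues: list[Cue] = []
    current: list[Word] = []
    for word in words:
        if current:
            candidate = " ".join(w.text for w in current + [word])
            too_many_chars = len(candidate) > max_chars
            too_long_on_screen = word.end - current[0].start > max_duration
            speaker_paused = word.start - current[-1].end > max_gap
            if too_many_chars or too_long_on_screen or speaker_paused:
                cues.append(_make_cue(current))
                current = []
        current.append(word)
    if current:
        cues.append(_make_cue(current))
    return cues


words = [Word("Subtitles", 0.0, 0.5), Word("help", 0.55, 0.8), Word("everyone.", 0.85, 1.3),
         Word("Especially", 2.4, 2.9), Word("on", 2.95, 3.05), Word("mute.", 3.1, 3.5)]
for cue in group_words_into_cues(words):
    print(cue)
```

Here the 1.1-second pause before "Especially" starts a second cue. A greedy rule like this is easy to reason about; production systems may also weigh punctuation and sentence boundaries.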

Taking all these developments into consideration, AI leads the charge into the new age of subtitles.

Real-time Subtitles for Streaming

AI's advanced multi-language processing can generate accurate, consistent subtitles in real time, a game-changer for live broadcasts in particular. Meanwhile, improved machine learning algorithms continue to reduce errors and strengthen speech recognition, bringing the accuracy of AI subtitles closer to that of human-generated captions.
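On the display side, live captions are often shown in a "roll-up" style: new words are appended to the bottom line, and when that line fills up, older lines scroll off the top so only the last few stay visible. The sketch below models that behavior for text arriving in chunks from a live recognizer. The class name, line width, and two-line window are assumptions for illustration; a real broadcast system would also handle timing, corrections to already-displayed words, and caption encoding standards.

```python
from collections import deque


class RollUpCaptions:
    """Keep only the last `max_lines` lines of a live transcript, wrapped to `width` characters."""

    def __init__(self, width: int = 32, max_lines: int = 2):
        self.width = width
        # A deque with maxlen discards the oldest line whenever a new one is appended.
        self.lines = deque([""], maxlen=max_lines)

    def feed(self, text: str) -> None:
        """Append a chunk of newly recognized text, word by word."""
        for word in text.split():
            last = self.lines[-1]
            if not last:
                # Empty bottom line: place the word even if it is wider than `width`.
                self.lines[-1] = word
            elif len(last) + 1 + len(word) <= self.width:
                self.lines[-1] = f"{last} {word}"
            else:
                # Bottom line is full: start a new one, scrolling older lines up.
                self.lines.append(word)

    def display(self) -> str:
        return "\n".join(self.lines)


captions = RollUpCaptions(width=12, max_lines=2)
captions.feed("welcome to the live stream")
print(captions.display())  # "the live" on the first line, "stream" on the second
```

Using `deque(maxlen=...)` keeps the scrolling logic to a single `append` call, since the oldest line drops out automatically.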

Interactive and Personalized Subtitles

The future of subtitles is likely to involve greater interactivity and personalization. Viewers may have the ability to customize their subtitle preferences, such as font size, color, and positioning on the screen. This customization will ensure a comfortable viewing experience for individuals with visual impairments or reading difficulties.
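One way a web player could apply such preferences is through WebVTT, the caption format used by the HTML `<track>` element, which supports a `STYLE` block with `::cue` CSS rules and per-cue settings such as `line` for vertical position. The sketch below writes a WebVTT file from a hypothetical `SubtitlePreferences` object. The preference fields and defaults are assumptions, and browsers differ in which `::cue` properties and `STYLE` blocks they honor, so treat this as an illustration rather than a guaranteed rendering.

```python
from dataclasses import dataclass


@dataclass
class SubtitlePreferences:
    """Hypothetical viewer settings; field names and defaults are assumptions."""
    color: str = "white"
    background: str = "rgba(0, 0, 0, 0.8)"
    font_size_percent: int = 100  # how a player interprets this may vary
    line: int = -1                # WebVTT line setting: -1 = bottom line, 0 = top line


def vtt_timestamp(seconds: float) -> str:
    """Format seconds as a WebVTT timestamp, HH:MM:SS.mmm."""
    total_ms = round(seconds * 1000)
    hours, rem = divmod(total_ms, 3_600_000)
    minutes, rem = divmod(rem, 60_000)
    secs, ms = divmod(rem, 1_000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d}.{ms:03d}"


def build_webvtt(cues: list[tuple[float, float, str]], prefs: SubtitlePreferences) -> str:
    """Write a WebVTT file whose STYLE block and cue settings reflect the viewer's preferences."""
    lines = [
        "WEBVTT",
        "",
        # STYLE blocks must appear before the first cue.
        "STYLE",
        "::cue {",
        f"  color: {prefs.color};",
        f"  background-color: {prefs.background};",
        f"  font-size: {prefs.font_size_percent}%;",
        "}",
        "",
    ]
    for start, end, text in cues:
        lines.append(f"{vtt_timestamp(start)} --> {vtt_timestamp(end)} line:{prefs.line}")
        lines.append(text)
        lines.append("")
    return "\n".join(lines)


prefs = SubtitlePreferences(color="yellow", font_size_percent=150, line=0)
print(build_webvtt([(1.0, 3.5, "Subtitles can be styled per viewer.")], prefs))
```

Regenerating the file per viewer is the simplest approach to show; a player could instead apply the same CSS rules to its own page without touching the subtitle file.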

Furthermore, interactive subtitles may enable viewers to access additional information or participate in real-time discussions related to the content. This could include clickable keywords that provide definitions or references, links to related articles or sources, or even integrated social media platforms for engaging with other viewers.

Quality Control and Customization

AI can play a crucial role in quality control when generating and adding subtitles to videos. Through automated checks, AI tools can detect and flag potential errors, inconsistencies, or timing issues in subtitles. Content creators and editors can then review and refine the subtitles, ensuring the highest possible quality before they reach viewers. AI-driven subtitling tools can also offer viewers customization options, letting them adjust subtitle settings such as font style, size, color, and background transparency.
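Many of these automated checks are simple rules over cue timing and text. The sketch below flags cues that end before they start, overlap the previous cue, flash by too quickly, demand too high a reading speed (characters per second), or contain overly long lines. The thresholds (17 characters per second, 42 characters per line, 0.8 seconds minimum) are assumed example values, not a standard; real style guides set their own limits per language and audience.

```python
from dataclasses import dataclass


@dataclass
class Cue:
    start: float  # seconds
    end: float    # seconds
    text: str     # may contain "\n" between subtitle lines


def check_cues(cues: list[Cue], max_cps: float = 17.0, max_line_chars: int = 42,
               min_duration: float = 0.8) -> list[tuple[int, str]]:
    """Return (cue_index, message) pairs for cues that look problematic."""
    issues = []
    for i, cue in enumerate(cues):
        duration = cue.end - cue.start
        if duration <= 0:
            issues.append((i, "end time is not after start time"))
            continue  # the remaining checks divide by the duration
        if duration < min_duration:
            issues.append((i, f"shown for only {duration:.2f}s"))
        if i > 0 and cue.start < cues[i - 1].end:
            issues.append((i, "overlaps the previous cue"))
        cps = len(cue.text.replace("\n", "")) / duration
        if cps > max_cps:
            issues.append((i, f"reading speed {cps:.1f} chars/s exceeds {max_cps}"))
        for line in cue.text.split("\n"):
            if len(line) > max_line_chars:
                issues.append((i, f"line has {len(line)} chars (max {max_line_chars})"))
    return issues


issues = check_cues([
    Cue(0.0, 2.0, "Hello there."),
    Cue(1.5, 2.0, "This line is far too long to read comfortably"),
])
for index, message in issues:
    print(f"cue {index + 1}: {message}")
```

Checks like these only flag candidates; as the paragraph above notes, an editor still decides how to fix each one.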

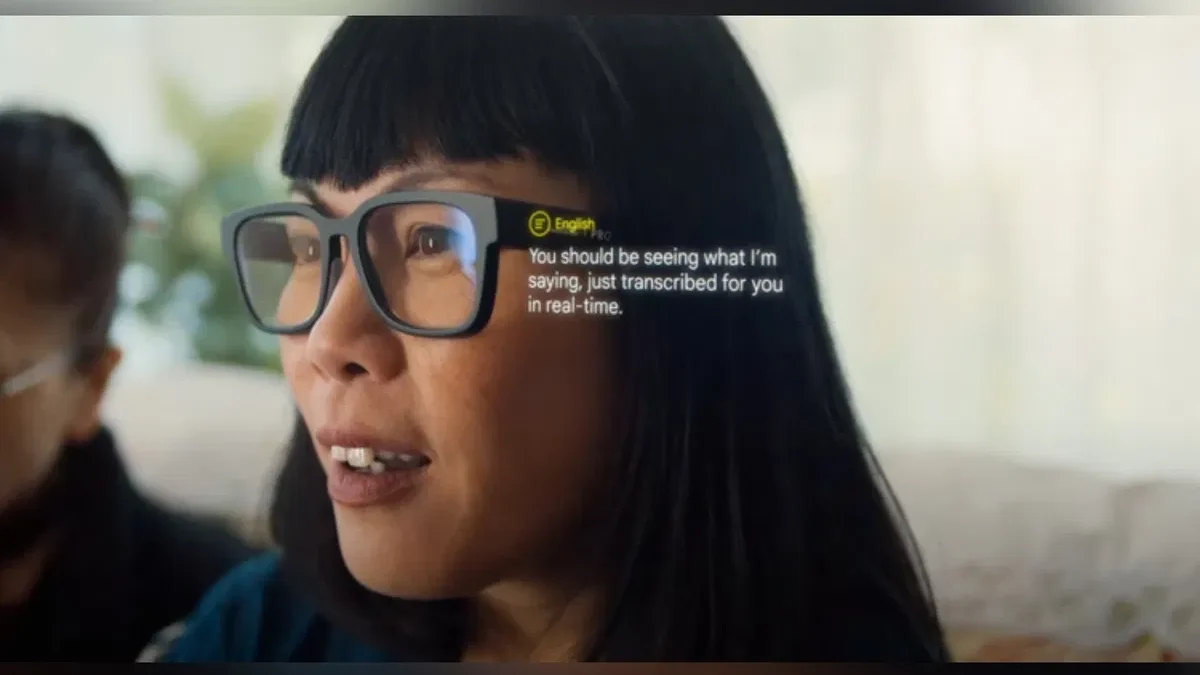

Augmented Reality and Wearable Subtitles

With the rise of augmented reality (AR) and wearable devices, subtitles could become more immersive and seamlessly integrated into our daily lives. AR glasses or headsets can overlay subtitles directly onto the viewer’s field of vision, eliminating the need for a separate screen. This technology would enable real-time translation or transcription for conversations, presentations, or even live events, revolutionizing cross-cultural communication.

Wearable subtitle devices, such as smartwatches or smart rings, could provide discreet subtitle displays that are always within the viewer’s reach. These devices would be especially beneficial for people with disabilities, as well as in noisy environments or situations where audio cannot be used, allowing individuals to follow conversations or enjoy media content without disturbance.

Integration of Sign Language

Subtitles have traditionally been focused on text-based translations, but there is a growing need for accessibility for the deaf and hard of hearing communities. In the future, we can expect subtitles to integrate sign language elements, allowing viewers to have a more comprehensive understanding of the content. This could involve incorporating sign language videos alongside textual subtitles, making media more inclusive for sign language users.

More Engaging & Contextual Subtitles

AI subtitles can do more than transcribe words; they can also convey context. Take humor: without context, a joke can fall flat. As AI becomes better at understanding nuance, video content is likely to become more engaging.

Apart from enhancing the viewer’s experience, AI subtitles also reach a larger audience. They can break down language barriers and serve the hearing-impaired community, making content more inclusive.

Embracing the Subtitling Future

The future of subtitles is exciting, promising a world where content accessibility is no longer a challenge. These expected trends in AI subtitles could redefine content consumption: attracting larger audiences, sustaining user engagement, and revolutionizing accessibility while bringing the world closer together. It is a future that media creators and consumers alike should look forward to embracing.